Base model object used to load a model exported by PaddleDetection.

#include <base.h>

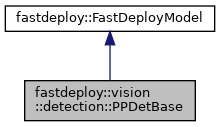

Inheritance diagram for fastdeploy::vision::detection::PPDetBase:

Collaboration diagram for fastdeploy::vision::detection::PPDetBase:

Public Member Functions | |

| PPDetBase (const std::string &model_file, const std::string &params_file, const std::string &config_file, const RuntimeOption &custom_option=RuntimeOption(), const ModelFormat &model_format=ModelFormat::PADDLE) | |

| Set the paths of the model file and configuration file, and the runtime configuration. | |

| virtual std::unique_ptr< PPDetBase > | Clone () const |

| Clone a new PaddleDetModel with less memory usage when multiple instances of the same model are created. | |

| virtual std::string | ModelName () const |

| Get model's name. | |

| virtual bool | Predict (cv::Mat *im, DetectionResult *result) |

| DEPRECATED. Predict the detection result for an input image. | |

| virtual bool | Predict (const cv::Mat &im, DetectionResult *result) |

| Predict the detection result for an input image. | |

| virtual bool | BatchPredict (const std::vector< cv::Mat > &imgs, std::vector< DetectionResult > *results) |

| Predict the detection result for an input image list. | |

Public Member Functions inherited from fastdeploy::FastDeployModel | |

| virtual bool | Infer (std::vector< FDTensor > &input_tensors, std::vector< FDTensor > *output_tensors) |

| Run inference on the model with the runtime. Predict() calls this interface internally, so Infer() rarely needs to be called directly. | |

| virtual bool | Infer () |

| Run inference on the model with the runtime. This interface uses the class member reused_input_tensors_ as input and writes results to reused_output_tensors_. | |

| virtual int | NumInputsOfRuntime () |

| Get number of inputs for this model. | |

| virtual int | NumOutputsOfRuntime () |

| Get number of outputs for this model. | |

| virtual TensorInfo | InputInfoOfRuntime (int index) |

| Get input information for this model. | |

| virtual TensorInfo | OutputInfoOfRuntime (int index) |

| Get output information for this model. | |

| virtual bool | Initialized () const |

| Check if the model is initialized successfully. | |

| virtual void | EnableRecordTimeOfRuntime () |

| Debug interface that records the time spent in the runtime (backend + h2d + d2h). | |

| virtual void | DisableRecordTimeOfRuntime () |

| Disable recording of the runtime's time; see EnableRecordTimeOfRuntime() for more detail. | |

| virtual std::map< std::string, float > | PrintStatisInfoOfRuntime () |

| Print the runtime's statistics to the console; see EnableRecordTimeOfRuntime() for more detail. | |

| virtual bool | EnabledRecordTimeOfRuntime () |

| Check whether runtime time recording (see EnableRecordTimeOfRuntime()) is enabled. | |

| virtual double | GetProfileTime () |

| Get profile time of Runtime after the profile process is done. | |

| virtual void | ReleaseReusedBuffer () |

| Release reused input/output buffers. | |

Additional Inherited Members | |

Public Attributes inherited from fastdeploy::FastDeployModel | |

| std::vector< Backend > | valid_cpu_backends = {Backend::ORT} |

| Model's valid CPU backends. This member lists all the CPU backends that have been successfully tested for the model. | |

| std::vector< Backend > | valid_gpu_backends = {Backend::ORT} |

| std::vector< Backend > | valid_ipu_backends = {} |

| std::vector< Backend > | valid_timvx_backends = {} |

| std::vector< Backend > | valid_directml_backends = {} |

| std::vector< Backend > | valid_ascend_backends = {} |

| std::vector< Backend > | valid_kunlunxin_backends = {} |

| std::vector< Backend > | valid_rknpu_backends = {} |

| std::vector< Backend > | valid_sophgonpu_backends = {} |

Detailed Description

Base model object used to load a model exported by PaddleDetection.

Constructor & Destructor Documentation

◆ PPDetBase()

fastdeploy::vision::detection::PPDetBase::PPDetBase (const std::string &model_file, const std::string &params_file, const std::string &config_file, const RuntimeOption &custom_option = RuntimeOption(), const ModelFormat &model_format = ModelFormat::PADDLE)

Set the paths of the model file and configuration file, and the runtime configuration.

- Parameters

- [in] model_file Path of the model file, e.g. ppyoloe/model.pdmodel

- [in] params_file Path of the parameter file, e.g. ppyoloe/model.pdiparams; ignored if the model format is ONNX

- [in] config_file Path of the configuration file for deployment, e.g. ppyoloe/infer_cfg.yml

- [in] custom_option RuntimeOption for inference; by default inference runs on CPU with a backend chosen from valid_cpu_backends

- [in] model_format Model format of the loaded model; the default is Paddle format

Member Function Documentation

◆ BatchPredict()

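virtual bool fastdeploy::vision::detection::PPDetBase::BatchPredict (const std::vector< cv::Mat > &imgs, std::vector< DetectionResult > *results)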

Predict the detection result for an input image list.

- Parameters

- [in] imgs The input image list; each element comes from cv::imread() and is a 3-D array with HWC layout in BGR format

- [out] results The output detection result list

- Returns

- true if the prediction succeeded, otherwise false

◆ Clone()

virtual std::unique_ptr< PPDetBase > fastdeploy::vision::detection::PPDetBase::Clone () const

Clone a new PaddleDetModel with less memory usage when multiple instances of the same model are created.

- Returns

- A unique pointer (std::unique_ptr< PPDetBase >) that owns the new PaddleDetModel
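One way a clone can use less memory than a freshly loaded instance is to share read-only data between instances while keeping per-instance buffers separate. The block below is only a sketch of that general pattern under stated assumptions: SketchModel and all of its members are hypothetical stand-ins, not FastDeploy classes, and FastDeploy's actual mechanism may differ.

```cpp
#include <cassert>
#include <memory>
#include <utility>
#include <vector>

// Hypothetical stand-in for a model class; NOT part of FastDeploy.
class SketchModel {
 public:
  explicit SketchModel(std::vector<float> weights)
      : weights_(std::make_shared<const std::vector<float>>(std::move(weights))) {}

  virtual ~SketchModel() = default;

  // Returns a new instance that shares the read-only weights with this one
  // and gets its own copy of the scratch buffer.
  virtual std::unique_ptr<SketchModel> Clone() const {
    return std::unique_ptr<SketchModel>(new SketchModel(*this));
  }

  // Accessors used to observe the sharing behaviour.
  const std::vector<float>* WeightsAddress() const { return weights_.get(); }
  long WeightsUseCount() const { return weights_.use_count(); }
  std::vector<float>& Scratch() { return scratch_; }

 protected:
  // Copying duplicates the shared_ptr (not the weights) and the scratch buffer.
  SketchModel(const SketchModel&) = default;

 private:
  std::shared_ptr<const std::vector<float>> weights_;  // shared across clones
  std::vector<float> scratch_;                         // owned per instance
};
```

In this sketch, calling Clone() on a model increases the reference count of the shared weights rather than copying them, and writing to one instance's scratch buffer leaves the other instance untouched.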

◆ Predict() [1/2]

virtual bool fastdeploy::vision::detection::PPDetBase::Predict (cv::Mat *im, DetectionResult *result)

DEPRECATED. Predict the detection result for an input image.

- Parameters

- [in] im The input image data, which comes from cv::imread() and is a 3-D array with HWC layout in BGR format

- [out] result The output detection result

- Returns

- true if the prediction succeeded, otherwise false

◆ Predict() [2/2]

virtual bool fastdeploy::vision::detection::PPDetBase::Predict (const cv::Mat &im, DetectionResult *result)

Predict the detection result for an input image.

- Parameters

- [in] im The input image data, which comes from cv::imread() and is a 3-D array with HWC layout in BGR format

- [out] result The output detection result

- Returns

- true if the prediction succeeded, otherwise false
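The HWC layout and BGR channel order expected of the input image determine where each value sits in memory. The block below is a minimal sketch of that indexing, assuming a contiguous 8-bit buffer with three channels and no row padding. HwcOffset, BgrChannel, and MakeSolidBgr are hypothetical helpers for illustration and are not part of FastDeploy or OpenCV.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Channel order in a BGR image: blue is stored first, red last.
enum BgrChannel : std::size_t { kBlue = 0, kGreen = 1, kRed = 2 };

// Hypothetical helper: offset of one channel of pixel (row, col) in a
// contiguous HWC buffer (height is the slowest axis, channel the fastest).
inline std::size_t HwcOffset(std::size_t row, std::size_t col,
                             std::size_t channel, std::size_t width,
                             std::size_t channels) {
  assert(col < width && channel < channels);
  return (row * width + col) * channels + channel;
}

// Hypothetical helper: builds a height x width image filled with a single
// colour, stored as HWC with BGR channel order.
inline std::vector<std::uint8_t> MakeSolidBgr(std::size_t height,
                                              std::size_t width,
                                              std::uint8_t b, std::uint8_t g,
                                              std::uint8_t r) {
  std::vector<std::uint8_t> data(height * width * 3);
  for (std::size_t row = 0; row < height; ++row) {
    for (std::size_t col = 0; col < width; ++col) {
      data[HwcOffset(row, col, kBlue, width, 3)] = b;
      data[HwcOffset(row, col, kGreen, width, 3)] = g;
      data[HwcOffset(row, col, kRed, width, 3)] = r;
    }
  }
  return data;
}
```

For an image of width 4 with 3 channels, the green value of the pixel at row 1, column 2 sits at offset (1 * 4 + 2) * 3 + 1 = 19.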

The documentation for this class was generated from the following files:

- /fastdeploy/my_work/FastDeploy/fastdeploy/vision/detection/ppdet/base.h

- /fastdeploy/my_work/FastDeploy/fastdeploy/vision/detection/ppdet/base.cc
